In an interview with Protocol, Facebook gaming VP Jason Rubin suggested that cloud-streamed Oculus VR is more than five years out.

Ultimately we’ll throw those processors in a server farm somewhere and stream to your headset. And a lot of people are going to say, “Oh my god, that’s a million years away.” It’s not a million. It’s not five. It’s somewhere between.

The Goal & Challenge Of Cloud VR

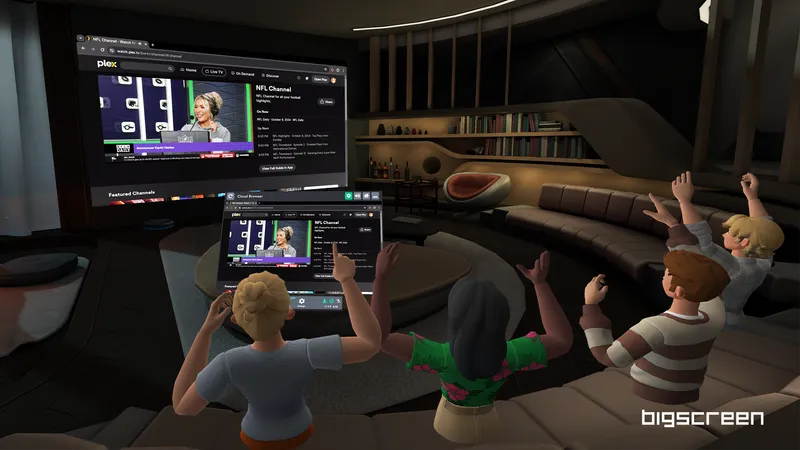

Standalone VR headsets such as Oculus Quest open up room-scale VR to people beyond those lucky enough to own a gaming PC. However, mobile chips are significantly less powerful than PC GPUs, which limits both the amount of detail possible and the types of games these headsets can play.

Oculus Link lets the Quest act as a PC VR headset, of course, but this still requires a gaming PC.

Virtual Desktop wireless streaming supports cloud PCs like Shadow VR, and some are using this already to play PC VR’s biggest hits. However, the latency makes most people feel sick, and the $15/month base tier’s CPU struggles in more taxing games. NVIDIA’s CloudXR platform streamlines VR streaming for enterprise by supporting NVIDIA servers natively.

Shadow and CloudXR use the same fundamental approach as Oculus Link. When a frame is rendered, it is compressed by the GPU’s video encoder and sent to the headset, where it is decoded and displayed. Encoding and decoding take time, and this adds latency on top of the time it takes for the frame to reach the device.
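The stages described above can be added up as a rough latency budget. The sketch below is illustrative only: the class name, field names, and every millisecond value are assumptions chosen to make the arithmetic easy to follow, not measurements from Shadow, CloudXR, or Oculus Link.

```python
from dataclasses import dataclass


@dataclass
class StreamingPipeline:
    """Per-frame stage durations in milliseconds (hypothetical placeholders)."""

    render_ms: float   # GPU renders the frame on the server or PC
    encode_ms: float   # GPU video encoder compresses the frame
    network_ms: float  # one-way transit of the frame to the headset
    decode_ms: float   # headset decodes the compressed frame
    display_ms: float  # headset presents the decoded frame

    def added_by_streaming(self) -> float:
        """Latency that exists only because the frame is streamed."""
        return self.encode_ms + self.network_ms + self.decode_ms

    def total(self) -> float:
        """Time from the start of rendering until the frame is shown."""
        return self.render_ms + self.added_by_streaming() + self.display_ms


# Made-up numbers, purely to show how the stages stack up.
example = StreamingPipeline(
    render_ms=10.0, encode_ms=5.0, network_ms=15.0, decode_ms=5.0, display_ms=5.0
)
print("added by streaming:", example.added_by_streaming(), "ms")
print("total:", example.total(), "ms")
```

A wired link like Oculus Link keeps the transit term small, while a cloud server adds internet transit to the same encode and decode cost.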

Back in December, Facebook acquired Spanish cloud gaming startup PlayGiga, which was taking a similar approach to Shadow and NVIDIA.

To reduce the apparent latency, the frame is warped in the direction the user’s head has rotated since the frame started rendering. None of the current systems do this for positional latency, however, which is why moving around and using controllers often feels much less solid.
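As a rough illustration of that rotational warp, the sketch below estimates how far the center of a frame would need to slide to account for a change in head yaw between render time and display time. It assumes a simple pinhole projection and yaw-only rotation, and the function names, resolution, and field of view are hypothetical. Real systems warp using the full 3D head rotation and lens distortion, not a single horizontal shift.

```python
import math


def focal_length_px(width_px: int, horizontal_fov_deg: float) -> float:
    """Focal length in pixels for an idealized pinhole camera."""
    return (width_px / 2) / math.tan(math.radians(horizontal_fov_deg) / 2)


def center_shift_px(
    yaw_at_render_deg: float,
    yaw_at_display_deg: float,
    width_px: int,
    horizontal_fov_deg: float,
) -> float:
    """Horizontal shift for the frame center, in pixels.

    Convention assumed here: yaw grows when the head turns right, and a
    negative result means the image content should slide left.
    """
    delta = math.radians(yaw_at_display_deg - yaw_at_render_deg)
    return -focal_length_px(width_px, horizontal_fov_deg) * math.tan(delta)


# Hypothetical case: the head turned 2 degrees right while the frame was in flight.
shift = center_shift_px(0.0, 2.0, width_px=2000, horizontal_fov_deg=90.0)
print(f"shift frame center by {shift:.1f} px")
```

A rotation can be corrected this way without knowing how far away anything in the scene is. A change in head position shifts near and far objects by different amounts, so a single warp of the flat frame cannot account for it.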

When the server is in the same city as the headset and network conditions are ideal, these services can just about reach the kind of latency VR requires. To get latency low enough to fully trick the human perceptual system, however, a different approach could be needed.

Rubin: Mobile Chips May Never Do AAA Graphics

According to Rubin, standalone headsets won’t be able to render virtual environments with AAA quality graphics “anytime soon”, due to both thermal and power limitations inherent in the form factor.

That’s not happening on a local headset, for the same reason that people can’t get those games to run on phones and anything else that’s battery powered and needs to dissipate heat. You can plug it into a wall, and you can put a liquid-cooled or fan-cooled graphics card in it, and sure. But you can’t do that on somebody’s face.

In the long run, Rubin suggested AAA-quality graphics will arrive through cloud rendering.

In the past, Rubin has spoken of the potential need for a new rendering architecture to solve this. In such a hypothetical system, the server would do the most intensive workloads to somehow make rendering fairly easy on the headset, without just crudely transferring raw frames.

If cloud VR can one day bring the power of a PC to fully standalone headsets, it would represent a revolution in the value such headsets offer consumers, but as Rubin warns, don’t expect that any time soon.