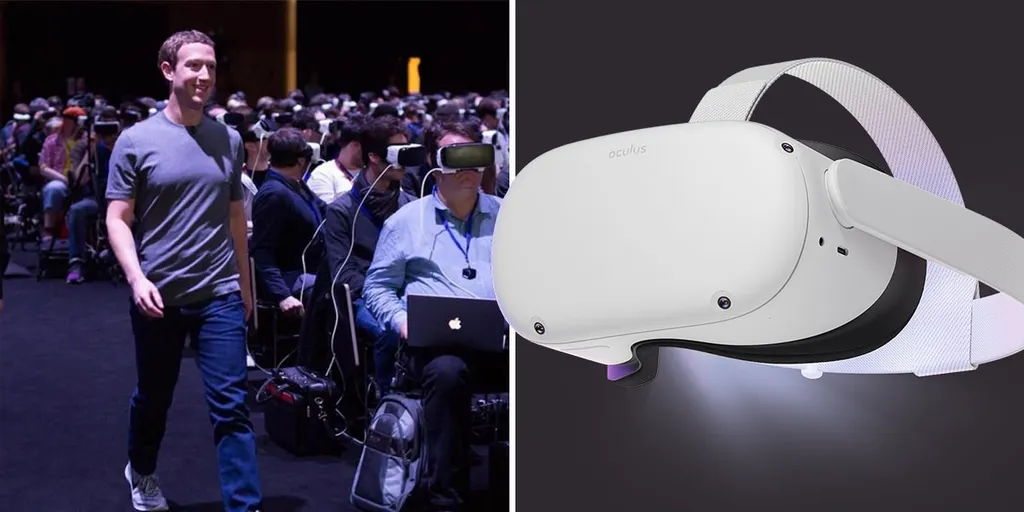

The laws governing the use of VR/AR technology aren’t necessarily the most exciting subject to most people, at least not compared to the adrenaline racing through the veins of anyone playing Resident Evil 4 on Oculus Quest 2.

In the coming years, though, free speech and safety may be on a bit of a collision course as online platforms move from 2D screens and out into the physical world. Ellysse Dick, a policy analyst from the Information Technology and Innovation Foundation (ITIF), recently organized and moderated a conference with the XR Association on AR/VR Policy. It’s a lot to take in and you can check out the five-hour event in a recorded video covering a wide range of the issues at hand. For a more abbreviated discussion, though, I invited the policy expert into our virtual studio to talk about the likely changes in store as this technology marches into mainstream use.

“There’s going to be growing pains,” Dick said. “The way we’re going to figure out what people think is too creepy and what is within limits is probably not going to be a nice little pros and cons list that we all follow. People are going to be freaked out. And in many cases, justifiably so, especially if their children are involved or their private lives are involved.”

Check out the roughly 28-minute discussion in the video below, or follow along with the transcription posted beneath it.

Free Speech In VR/AR? A Conversation With ITIF Analyst Ellysse Dick

[00:00] Ian Hamilton: Thank you so much for joining us today. Ellysse Dick, you’re an expert in privacy and policy, can you explain to us what you do and where you work and what some of the major issues are that you’re thinking about these days?

[00:11] Ellysse Dick: I’m a policy analyst at the Information Technology and Innovation Foundation. We are a tech and science policy think tank in Washington, DC, and we cover a wide range of science and technology policy issues. My specific area is AR/VR. I lead our AR/VR work stream, and that means I cover really everything from privacy and safety to how government can use these technologies and how we can implement them across sectors. A lot of my work is obviously in the privacy and safety realm, because it seems like that’s the first thing we have to get right before we can actually start thinking about how policy will impact this tech.

[00:49] Ian Hamilton: So I think a lot of brains might turn off out there when they hear policy talked about, but if we wanted to get to those people right at the start of this interview, what are the things that you think need to change about policy regarding AR and VR and this entire technology stack?

[01:07] Ellysse Dick: I think when people think of policy, the first thing they think of is, like, Congress making laws, right? But what we’re actually talking about is the full range of ways government can support innovation in this field. That even includes things like government funding for different applications and use cases. We actually just had a conference where we talked about this; you know, the National Science Foundation does a lot of work funding up-and-coming applications for these technologies. So policy isn’t just laws that make things harder; it’s how our policymakers and our government will support this technology and make sure it’s being used correctly going forward.

[01:46] Ian Hamilton: Are there very different things in mind for AR than there are for VR, sort of like in-home technology use versus everyday, out-in-the-world technology use?

[01:59] Ellysse Dick: We do tend to clump them together when we’re talking about it broadly, just because that’s easier when we’re describing the technology, especially to policy folks who might not be thinking about this every day like we do. But you’re right, they are two very separate technologies when we’re thinking about the policy implications. Obviously, AR raises a lot more questions about privacy in the outside world: bystander privacy, what it means if we’re walking around with computers on our faces. That’s a very different question than VR, which from a privacy standpoint is much more about biometric data capture, user privacy, and how we interact in virtual space. Also in safety, if we’re talking about AR, we’re talking more about making sure that the ways the digital and physical worlds interact are safe and age appropriate and don’t involve harassment or defamation, whereas in VR, when we’re talking about safety, we’re really talking about the ways that humans interact in fully virtual space, which raises a lot of questions about things like free speech. How much can we actually mediate these spaces when we’re really just talking face to face in a virtual world?

[03:02] Ian Hamilton: The rumor is that Facebook’s gonna rebrand its entire company to something new. Consider the evolution of that company, starting as an organization where you upload photos from the past, it identifies where the faces are in your photo, and then you tell it whose face it is. You’re kind of training the system to understand what the faces of your friends and family look like, and then it can find those faces in later photos and make it easy for you to tag them in the future. That’s a very early feature of the Facebook platform, and when I think about wearing Ray-Ban sunglasses that let me go out into the world and take photos or videos of people, it would not be hard to take that video back to my machine or my server to process it and have every single face in that photo or video analyzed and taggable. I think that brings home how big of a shift we’re in for technologically as soon as more of these glasses are mainstream. What policy issues govern exactly what I just described?

[04:11] Ellysse Dick: That’s a great question. I actually wrote a little bit about this when the Ray-Bans came out. Right now what we’re looking at is basically a cell phone recording camera on your face. So the way it exists right now, the form factor is different, but the underlying policy concerns are very similar to those around things like body-worn cameras, cell phone cameras, or any kind of recording device. And we do have laws in this country about one- or two-party consent when recording other people. We have laws that protect against things like stalking. But we do need to start thinking about whether those laws will be enough when we start asking, ‘What if you don’t have to bring that video home and process it on your server? What if you can do it right there on the device?’ That raises a lot of questions about whether the laws we have right now will be enough to protect against malicious misuse. One of my biggest concerns is people who are not using these devices the right way, because obviously there are a lot of very exciting ways to use them the right way that are going to make society better. But a huge role for policy is to think about that malicious misuse and what we can do to protect against it. We need to look a few steps forward, beyond just the camera-on-your-face aspect, to really think about that.

[05:22] Ian Hamilton: I was one of the first people to get an iPhone, and I remember feeling like I had this superpower back when it came out: having Google Maps in my hand, able to navigate me and my friends in real time around a new city when we didn’t actually know where we were going. Prior to that, there was just no way to pull up directions everywhere you went. It was a magical feeling. And I had this camera with me at all times, so we were able to take wonderful photos remembering the occasion. But those early use cases of what cell phones were good for are completely different from where we are today, when they are sometimes a recording device of last resort when you have an altercation in public; in some instances you pull out the phone as quickly as possible to document the absurdity of someone else’s behavior in a particular setting. And I think about that evolution, which took maybe 10 or 15 years to actually happen. We’re kind of at the beginning of that journey when it comes to glasses or body-worn cameras. And you talk about consent for two people interacting; there are plenty of videos out there on the internet, including some of the most popular internet videos, of situations where only one party consented to the recording.

[06:45] Ellysse Dick: The two-party and one-party consent laws differ by state and largely have to do with recording conversations, not necessarily image capture, so that is a major difference there. But you talk about the cell phone; we can go all the way back to the first Kodak camera if we want to talk about the evolution of how we capture moments in time. One of the things I’ve talked about a lot is distinguishing this evolution in how we perceive and interact with the world from the actual risks of harm from a new technology that might be different from older ones. A lot of the things that people were worried about with drones or with cell phone cameras or with the original handheld camera do apply to AR glasses, and we can learn from that. Then we need to look forward and figure out what’s different besides just the form factor to determine what other law and policy we need for it. I think your point about how our use of cameras is evolving is a good one, but I don’t necessarily think that policy is the place to address whether or not you should be able to take a video of someone else’s actions in public and upload it to the internet, because of the way we perceive the world now; we just capture so much of it. I do think consent is very important, and there are situations where there should be more consent mechanisms in place, or at least ways that people can opt out of recording. That’s especially true if we’re talking about more advanced AR glasses that might capture not just video but possibly biometric or spatial information about people. So we need to think of ways we could use technologies, like maybe geo-fencing or other sorts of opt-in and opt-out options, to allow people to still own their own private spaces: private businesses, places of worship, public bathrooms, that kind of thing.

[08:27] Ian Hamilton: When I first started reporting on VR, I recognized it as likely a future computing platform that would change things quite a bit. I just think we’re in for so much future shock as this technology goes out in larger numbers. What I’m noticing change this month and last month is that you’ve got the Space Pirate Trainer arena mode on Oculus Quest, and it’s driving people out into public with their Oculus VR headsets to find space to play this game. And then you’ve got the Ray-Bans going out into the real world in exactly the same way, where people are just adopting them and taking them out into the real world, and they’re changing behaviors left and right. I expect behaviors to change ahead of policy and even ahead of social norms, which have to catch up to the way people end up wanting to use these devices. I’ve seen two examples of this. One was a research paper that converted every car on the street into sci-fi-style vehicles and erased people from the street in real time. The other was a similar technology that turned the face of every passerby going up an escalator into a smile, so all these frowns instantly turned into smiles. Those are going to be significant changes as soon as glasses can augment everyday interactions. Let’s turn to the age 13 restriction: are the policies around children’s use strong enough to be ready for the type of change that’s coming?

[10:03] Ellysse Dick: No, I don’t think so. One of the issues with the way we approach privacy right now, especially in this country, is that people tend to come up with a laundry list of the types of information you can and cannot collect. In the case of children, this is COPPA, which lists the kinds of information that require express parental consent to collect. The problem is that if you just keep adding to that laundry list, you’re never going to keep up with the kinds of technologies that are out there, and you might inadvertently restrict use of the technology. Take biometric data, for example, especially if we were to extend COPPA to an older age that might be a little more appropriate for using head-worn devices. If kids are using motion capture, what does that mean for the ability of younger children or even preteens to use this tech, perhaps in an educational context, or in something that might be very valuable to them beyond just entertainment? So I do think we especially need to rethink how we approach children’s privacy. Obviously it should be a priority that children are safe on these platforms, physically, mentally, and emotionally, but I don’t think saying specifically that you can and can’t gather this type of data is the way to move forward. We need a much more holistic approach.

[11:16] Ian Hamilton: What do you think people need going forward in order to feel more comfortable with these devices?

[11:22] Ellysse Dick: I’m going to say we need national privacy legislation, first and foremost. It is impossible for companies to have a baseline to build their privacy policies and practices on if there are laws in some states, no laws in other states, and no national baseline, especially when so many of them are starting out in the United States. So comprehensive national privacy legislation is an absolute must for all of these things you’re talking about. From there, I think companies do need to think about notice and consent a lot differently when we’re talking about immersive spaces, especially because it’s a new technology. A lot of people aren’t going to actually understand what it means to collect, say, eye-tracking data or motion-tracking data. So we need to find a way to help people understand what can be done with that information. And we also need to make sure that, in the event that information is compromised or misused, we have laws on the policy side to address that. One of the things I think about a lot is how non-consensual pornography could translate into these spaces. If someone breaches someone else’s sensitive data in an immersive space, you need to have laws to address that. We need laws to address the privacy harms. And then we also need companies to be at the forefront of this, making sure those harms aren’t happening in the first place. We need to make sure that users are informed and actually understand what data is being collected and what that could mean for them.

[12:44] Ian Hamilton: I’m thinking of when you’re in an online space on Facebook, like Horizon Worlds. I believe there’s a rolling recorder capturing your last few, I dunno if it’s seconds or minutes, of your interactions online, and that sort of rolling body camera, so to speak, on everyone in that online space can be used to report any action at any time. If there’s behavior in that rolling video, of whatever length, that gets turned in to Facebook, you could theoretically get banned or have your account revoked. Is that what we’re in for walking around with AR glasses, as sort of the de facto standard for regulating behavior in public? Obviously laws keep certain people in line in certain situations. Is everyone wearing a one-minute rolling camera going to do the same thing in the future?

[13:41] Ellysse Dick: I think you bring up a great point. Especially with the Horizon recordings, there’s going to be a huge trade-off here between privacy, safety, and free speech. With these three things, you’re never going to be able to have all of them at a hundred percent at once. To have safety we might have to give up some privacy and have some form of monitoring available. We have that already on social media, right? Systems can detect what you’re uploading before you even put it on the platform to make sure there’s no egregious content in there. We can debate how effective that system is, but it is there; there is monitoring involved in the way we communicate online right now. But when we’re talking about real-time communication that includes gestures, that really changes the game. It makes it a lot harder to do it the way you would on a 2D platform. So there is absolutely a question of how much trade-off we’re willing to accept in a fully immersive space. When we’re talking about AR, I think it’s sort of the same thing. Perhaps you have an application where people draw in real time on physical spaces and those drawings can be easily erased. Do you keep track of that? Do you keep track of their activity, their virtual activity in physical space? Well, you might want to, if you want to be able to go back and prove that they did something inappropriate, so you can ban or remove them. But we’re still talking about real-time activity and free speech, so that’s a question I don’t have a full answer for. I think it’s one we really need to look at on the policy side, and individual platforms developing these technologies also need to take a step back and think about where they want to make those trade-offs, because it will have a huge impact on how we interact with each other and the world going forward.

[15:16] Ian Hamilton: What policies are in Facebook’s interest to change? And are those the same policies that everyone who is using this technology should see changed?

[15:31] Ellysse Dick: As more people come in, we’re going to have to change the way we approach things like community guidelines and user education. So many spaces, especially VR spaces, have really been built on small communities of enthusiasts who have established their own norms that make sense in a small group, but that’s not going to scale. So there needs to be more of a concerted effort to build in community guidelines and make sure people understand how those work, just from a person-to-person interaction standpoint. There are also personal safety guidelines: people really need to understand how to not run into walls or trip over tables, and understand that they are responsible for that themselves. People really don’t understand how easy it is to fling your hand into your own desk when you’re sitting at it in VR. I’ve done it plenty of times, and I use VR on a regular basis. So I think that user education portion is really important. That’s why user policies and user controls are something that Facebook and other companies building these platforms really need to think about and try to preempt as much as possible, by allowing users to shape the experience in a way that makes sense for them: making sure they can use the experience sitting down if they have mobility challenges or a small space, setting perimeters around themselves if they’re not comfortable being close to people, or being able to mute other people. The nice thing about a fully virtual space is that you can make it fully customizable to the individual. Obviously a fully customizable experience is a lot to ask, but making sure users have the ability to shape it in a way that feels safe, secure, and enjoyable for them is really important. I think companies need to look at ways to bake that into their user guidelines and their user policies.

[17:07] Ian Hamilton: Let me ask a sort of tricky question here. Are elected officials in the United States prepared to make these changes that more tech-savvy people understand deeply? A person like yourself, who understands this technology and the policy deeply, is not the same as the 70- or 80-year-old person who has been in office for 20 to 30 years, or whatever their length of time. How do we actually effect change through our elected officials? And are there traits people should look for among candidates in elections to push all this in the right direction?

[17:44] Ellysse Dick: Before we even get to elected officials, self-regulation and self-governance in industry are going to be critical here, for exactly the reason you said. There’s not a strong understanding of it within certain policy circles, and building standards within industry so everyone can agree on best practices is a really important first step. Whether people follow those best practices is obviously a different question, but it at least establishes what we should be doing. Policy is not going to do that fast enough, so self-governance is really important. As far as actual elected members of the government, we do want more people who understand how technology works, but we also want people who have a strong understanding of the potential harms and the ability to make the distinction I was talking about earlier: separating the privacy panic and the sci-fi dystopian visions of the future from actual things that could happen that policy could prevent, through things like privacy legislation, online safety legislation, child safety, that kind of thing. A policymaker doesn’t have to know the inner workings of a specific technology if they can understand its potential uses three or four steps out and think backward from there. Everyone in Congress should put on a headset, because I think it would help everyone understand better what we’re talking about here; it’s really hard to describe to someone who’s never used it before.

[19:01] Ian Hamilton: I’m thinking about the governor in the state next door to me who apparently got pretty upset at a journalist for viewing the source code of a state website. Think about that misunderstanding of how technology works compared to where we are with these headsets and how they function. Members of Congress, if you’re an elected official, you need to try these headsets on and really understand how they function, the wide range of what they actually do, and how they change society. I’ve been wearing the Ray-Ban sunglasses out in semi-public spaces, like the baseball field with my kid. Going out to the baseball field to film my own child is a little different from wearing dark sunglasses and filming random kids on other teams. If another parent sees my sunglasses with a little light on them from the other side of the field, I would totally understand them being alarmed: who is that person? Why are they filming? That’s a completely different action than holding up your camera and very obviously telegraphing to everyone else on the field that I’m filming in this particular direction and my kid is in that direction. And I just wonder whether elected officials are actually out there using technology the same way everyone else does. That’s why I’m inviting it into my life this way, to really understand how it changes those social norms.

[20:26] Ellysse Dick: There’s going to be growing pains. The way we’re going to figure out what people think is too creepy and what is within limits is probably not going to be a nice little pros and cons list that we all follow. People are going to be freaked out. And in many cases, justifiably so, especially if their children are involved or their private lives are involved. I think that’s going to help shape the social norm side of things, and once we get past that, we can see where there are policy gaps. The more policymakers can be involved in that first round, actually using the technology, experiencing it, understanding what the limitations are and where they might want to add more guardrails, the faster we can get to that coexistence of policy and social norms, compared with us using the technology over here, figuring out whether it’s creepy, and then 10 years down the line going to Congress and saying, ‘Okay, we figured out what you need to legislate about.’ If we’re all involved in this process together, and industry is working with Congress and vice versa to really help them understand the technology, I think we can prevent some of the most egregious misuse of the technology.

[21:31] Ian Hamilton: It’s tough that sci-fi ends up being our only reference point for so much of this. Almost every sci-fi story is a dystopia. I’ve been reading Rainbows End recently for its picture of a world that isn’t necessarily so dystopian, one that actually shows you how a world functions when you can have four people in a given location and one of those people is a rabbit at a completely different scale from the rest. Those are the types of things we are in for in how people interact: the rabbit’s identity could be masked in an interaction with three people feeling like they’re in the same spot together. It’s a bummer that we’ve got sci-fi as the only thing to measure against. This interview is happening right before Facebook announces whatever its rebrand is, and I hate to give so much weight to whatever they decide. But I’ve noticed Mark Zuckerberg, in some of his interviews with various people, talking about governance of the overall organization and how that might change in the future. I would not be surprised to see an effort to give the community more than just a token say in what happens on that platform. This is a long-winded way of asking: do you think our social networks become a layer of self-governance that happens before, or even replaces, our traditional institutions?

[23:02] Ellysse Dick: You could sort of say that’s happening already on 2D platforms. People interact with the world so much through social media that social media has a role in how people perceive the world, and the way people use social media helps them understand how to shape their algorithms and their platforms. Obviously, when we’re putting this into 3D space, it really extrapolates that into something like a second real world, and I do think that users and people interacting within virtual spaces have a role to play in this governance. That means focusing first on user education, so that people coming into the experience know what’s going on and how to use it, and then really focusing on user feedback and social feedback, paying attention to those growing pains I was talking about and really taking them into consideration. I agree, I really don’t like that sci-fi is sometimes our only analogy. I don’t want everyone to think we’re heading toward the dystopian future that every sci-fi novel about VR describes, because it doesn’t have to be that way. But if it’s not going to be that way, we have to bring everyone into the conversation. Industry has to have a role. Government has to have a role. Researchers like myself and academic institutions need to have a role, as do the great civil society advocates who are out there making sure the important questions get raised. It has to be a really comprehensive effort, and if it’s done right, we can create really innovative new ways to interact with the world. But absolutely, it has to be a whole-of-society effort.

[24:34] Ian Hamilton: How do you think de-platforming works in this future? It feels like there is a fundamental disconnect among certain members of society about how free speech works on a given company’s platform. When someone gets de-platformed by a given organization, you frequently hear the word censorship thrown around to describe what just happened. How does that work when your speech is out there in the world, and you’re not behind a keyboard in a house or touching a touchscreen? You’re kind of walking around everywhere with a soapbox, ready to jump on it at any time, and a company can take that soapbox away from you if what you say on it violates their private policies. So how does de-platforming work in this future?

[25:30] Ellysse Dick: Free speech is going to be one of the biggest questions we have as more and more people use immersive experiences as their primary form of digital communication. Because, like you said, it’s not the same as typing something on a screen and having a post taken down. It’s actually, ‘I feel that I am speaking to you right now in this virtual space.’ De-platforming me or removing me from the space feels a lot more hands-on, I guess, than flagging and removing a post that I put on Facebook or Instagram or Twitter. So I think companies, especially now that they’re already being asked to question how they think about de-platforming and content moderation, really need to think forward. Companies building these virtual experiences that might have some social media experience, like Facebook, need to think about what free speech looks like in a virtual environment and what the consequences of de-platforming could be, because I think it will be different from de-platforming on social media. They also need to think about what level of misuse or platform abuse warrants that level of speech removal. It is different from social media, and I think just porting the standards from Facebook or Twitter into an immersive space is not the answer at all.

[26:44] Ian Hamilton: Hmm, well, that’s a lot to think about. Hopefully we’ll have you on again, because this is an ongoing conversation. Thank you so much for the time.

[26:53] Ellysse Dick: Absolutely. This was great. Thanks for having me.