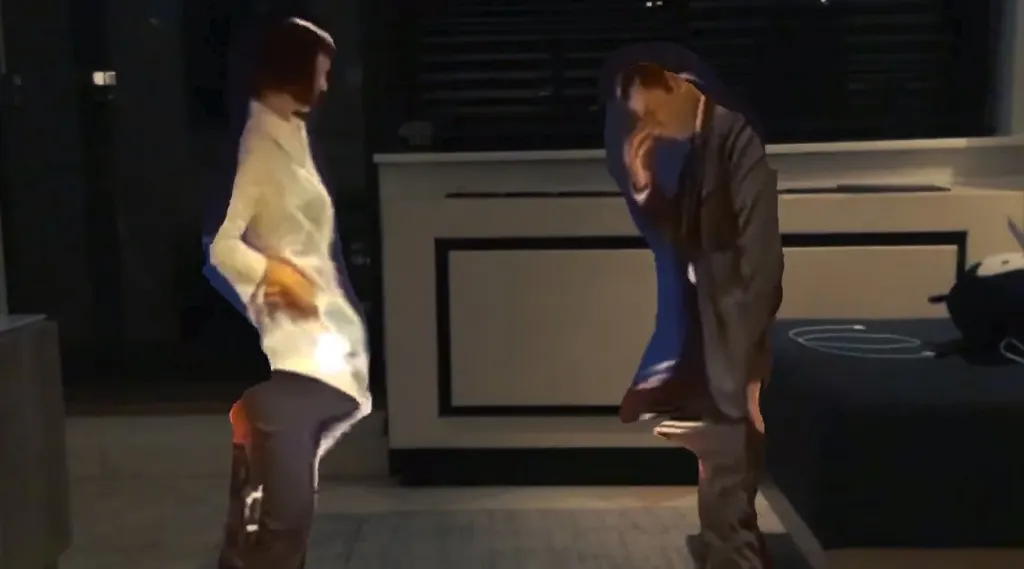

Ever wanted to dance alongside John Travolta and Uma Thurman in that scene from Pulp Fiction? This AR app could one day bring it right to you.

Volume is a new experience made by Or Fleisher and Shirin Anlen that recognizes 2D images in movies and projects them into 3D space. In the case of Pulp Fiction, for example, it’s able to cut away the scene around the actors and project just them dancing in the user’s living room. Take a look below, but take note: it’s in its very early stages right now.

Sure, the process is glitchy, but imagine what this could look like when you’re able to accurately bring Darth Vader, Gollum or Indiana Jones right into your living room.

“Our experiment with Pulp Fiction allows users to step inside one of the film’s scenes in Augmented Reality, using Apple’s ARKit framework on an iPad,” Fleisher explained to UploadVR. “This experiment is one of a few we are conducting at the moment, which illustrates the power of being able to reconstruct 3D scenes from 2D images. The possibilities of being able to reconstruct archival and static footage into 3D environments are one of the main motivations behind the development of the tool used to create these experiments called Volume.”

The system uses machine learning to predict depth from a single image: a convolutional neural network estimates a corresponding depth value for each pixel of the frame.
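The article doesn’t say how Volume turns a predicted depth map into 3D geometry. One common approach, assumed here and not confirmed as Volume’s method, is to back-project every pixel through a pinhole camera model, keeping only the pixels a segmentation mask marks as foreground (the actors, in the Pulp Fiction case). The `Intrinsics` fields (`fx`, `fy`, `cx`, `cy`), the `backproject` function and the `mask` input are all hypothetical names chosen for this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Intrinsics:
    """Hypothetical pinhole camera parameters, all in pixels."""
    fx: float  # focal length along x
    fy: float  # focal length along y
    cx: float  # principal point x
    cy: float  # principal point y


def backproject(depth, mask, k):
    """Turn a per-pixel depth map into 3D points in camera coordinates.

    Assumption: depth[v][u] is a depth value (e.g. from a CNN) for pixel (u, v),
    and mask[v][u] is True for pixels to keep (e.g. the actors).
    Pixels that are masked out or have no valid depth (<= 0) are skipped.
    """
    points = []
    for v, (depth_row, mask_row) in enumerate(zip(depth, mask)):
        for u, (z, keep) in enumerate(zip(depth_row, mask_row)):
            if not keep or z <= 0:
                continue
            # Invert the pinhole projection u = fx * x / z + cx (same for y).
            x = (u - k.cx) * z / k.fx
            y = (v - k.cy) * z / k.fy
            points.append((x, y, z))
    return points


depth = [[2.0, 2.0], [4.0, 0.0]]
mask = [[True, False], [True, True]]
k = Intrinsics(fx=1.0, fy=1.0, cx=0.5, cy=0.5)
print(backproject(depth, mask, k))  # [(-1.0, -1.0, 2.0), (-2.0, 2.0, 4.0)]
```

The resulting point list could then be meshed or rendered as a point cloud and anchored in an AR session; the masking step is what would let the actors appear on their own, without the rest of the scene.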

Right now, Volume is being developed as an end-to-end web app and will later support AR and VR platforms. “Our entire machine learning infrastructure is based on Google’s AI tool TensorFlow,” Fleisher said. “We are implementing extraneous considerations into the hardwired and complex field of machine learning. Our approach is motivated more by cinematic and visual storytelling than the mathematical logic of reconstructing 3D worlds. We feel that this approach will benefit this tool and lead to a new creative way of understanding the field’s challenges.”