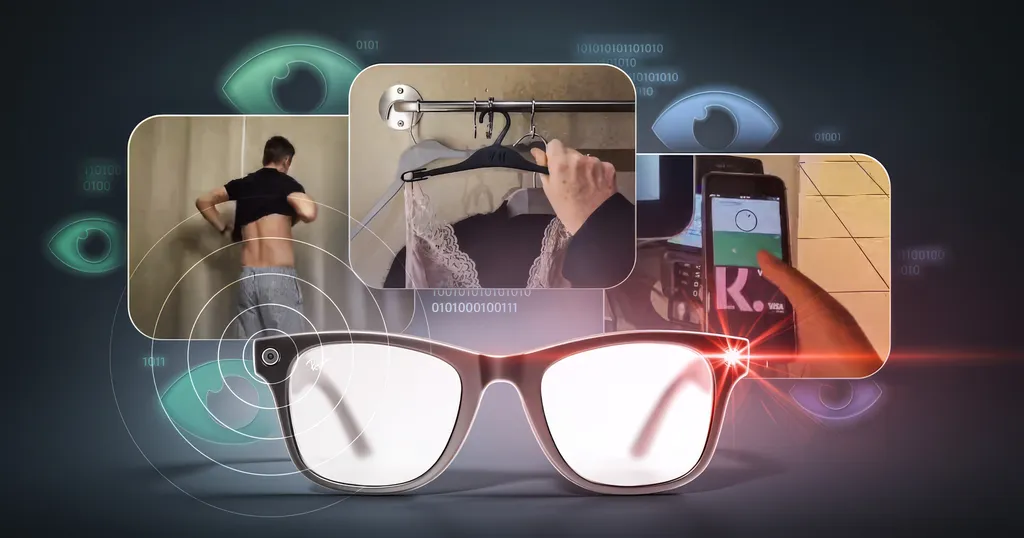

Subcontractors are seeing intimate images from Meta AI visual queries made on the company's smart glasses, some of them accidentally triggered, according to a report from two Swedish newspapers.

Svenska Dagbladet and Göteborgs-Posten's joint report has led to widespread worry about the privacy of smart glasses, not only for bystanders (which was already a concern) but also for the owners themselves.

To be clear, the issue here is not with the general photo and video capture feature of the Ray-Ban and Oakley smart glasses. Photos and videos you capture with the glasses sync to your phone and are not viewed by Meta or subcontractors, nor are they used to train AI models.

Instead, the report concerns the visual query functionality of Meta AI on the devices, and its propensity for accidental activation.

The Meta AI visual queries feature started rolling out in early 2024, around six months after the Ray-Ban Meta glasses launched. It originally required explicitly saying "Hey Meta, look and tell me <query>", at which point the AI captures a frame to provide a response.

A portion of these responses is sent to outsourced contractors in countries with cheap labor, such as Kenya, who rate each response on whether it is useful and accurate. Meta uses this human review data to improve the quality of Meta AI's responses over time.

Late in 2024, as announced at Meta Connect that year, Meta AI was updated to infer more naturally from the context of your query whether it requires a camera capture. For example, "Hey Meta, what kind of plant is this?" or "Hey Meta, translate this menu" would trigger one.

This update made Meta AI more natural and useful. But it also made it far more likely for the AI to capture a frame when you do not intend it to, whenever the device mistakenly thinks it heard you say "Hey Meta". Further, in the US and Canada, where the Live AI feature is available, these captures can even include video clips. Live AI lets you start a continuous conversation with the AI, similar to Google's Gemini Live on smartphones.

Combined with the contractor review system, this creates the nightmare scenario the report uncovered, in which human reviewers can see what are essentially accidentally captured intimate photos from inside the homes of smart glasses owners.

The Kenyan data reviewers who spoke to the Swedish newspapers reported seeing images and video clips of naked people going to the bathroom, changing clothes, and having sex, as well as people watching porn or holding up sensitive documents and bank cards.

The facility where these data reviewers work enforces strict security practices that prevent them from bringing any recording devices to work or otherwise exfiltrating these clips. But if a data breach were somehow to occur, it would trigger an “enormous scandal”, the report suggests.

In response to the Swedish report, Meta issued a general statement saying that visual data is "first filtered to protect people's privacy", including by blurring faces and license plates. But the Kenyan workers claim this filtering is imperfect, and that other intimate details remain visible in the imagery they review.

The practice of having subcontractors review AI interactions is not unique to Meta. Amazon does this for Alexa, Google for Gemini, and Apple for Siri, for example. In 2019, a report from Bloomberg revealed that these subcontractors heard intimate bedside conversations captured by Amazon Echo devices, while another report from The Guardian revealed that the same was happening with Apple's Siri.

Following the backlash to The Guardian's report, Apple made human review of Siri conversations opt-in, while Google lets users opt out for Gemini.

For Meta AI and Amazon Alexa, however, there is no way to opt out. And for Meta, the smart glasses form factor, with its egocentric camera, makes this review model uniquely concerning for privacy, enough that people aware of the implications might never want to buy smart glasses, or might stop using a pair they already own.

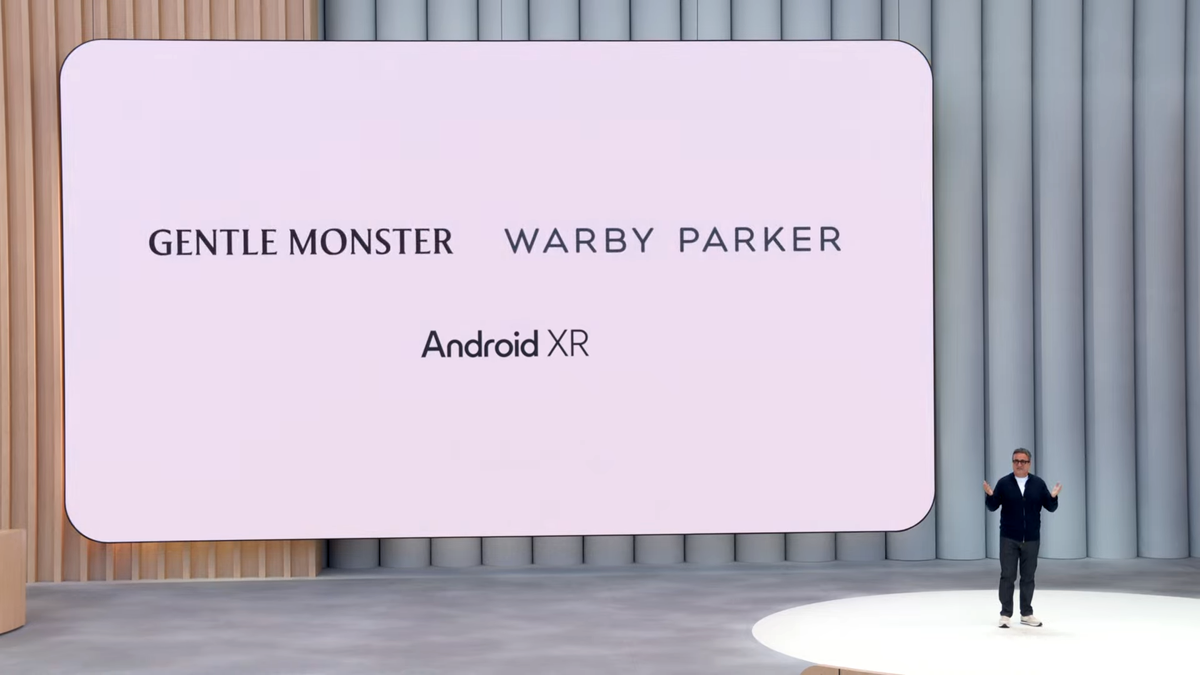

Will this report prompt Meta to change its data review practices, as Apple did following The Guardian's 2019 report? Or will Meta ignore it so that it can improve Meta AI faster and catch up to stronger AI models like Google's Gemini 3? And how will Google and Amazon handle this issue as they launch consumer smart glasses in the coming months?