Meta says its “goal” is to deliver automated room scanning for mixed reality.

Five months before Quest Pro’s release, Mark Zuckerberg said it would feature an IR projector for active depth sensing. Devices with depth sensors like HoloLens 2, iPhone Pro, and iPad Pro can scan your room automatically, generating a mesh of the walls and the furniture, so that virtual objects can appear behind real objects and collide with them.

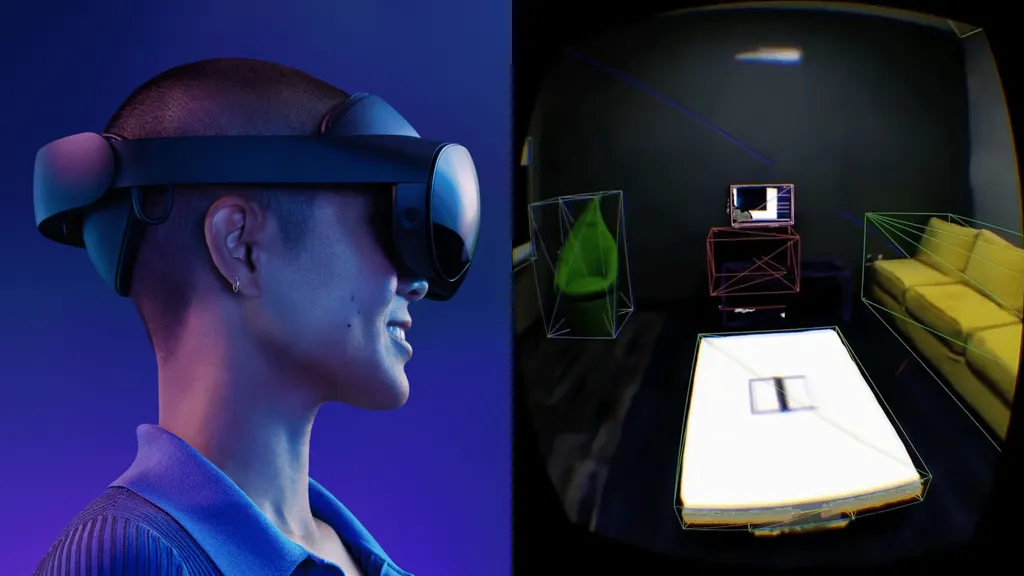

At the Oculus Connect 5 conference in 2018, Facebook showed off research towards semantic room scanning, and the device shown appeared to have a depth sensor.

But the depth sensor was dropped from Quest Pro in the months before it launched. Today on Quest headsets, you have to manually mark out your walls and furniture for virtual objects to collide with physical objects. This is an arduous process with imperfect results, adding significant friction, so very few apps on the Quest Store support this kind of full-fledged mixed reality.

In a blog post published today, in which Meta brands its color passthrough tech stack ‘Meta Reality’, the company said: “In the future, our goal is to deliver an automated version of Scene Capture that doesn’t require people to manually capture their surroundings.”

Automated room scanning without hardware-level depth sensing has already been demonstrated in research papers, and by some smartphone apps, using state-of-the-art machine learning techniques for computer vision. But the systems in those papers run on powerful desktop GPUs, and those smartphone apps use the entire power of the mobile chip, leaving nothing for rendering or other tasks. Shipping a reliable and performant room scanning system on a headset powered by what is effectively a modified two-year-old phone chip could prove extremely challenging.

Adding depth sensors would increase cost, so if Meta can achieve automated scanning in software, it could enable significantly more affordable full-fledged mixed reality headsets in future, such as the “around $300-500” Quest 3.