The computer graphics conference SIGGRAPH, held in Los Angeles, featured a series of VR- and AR-related projects premiering at the event.

The long-running conference serves as a yearly showcase of research, tools and art focused on the field of computer graphics. SIGGRAPH was held from July 28 to Aug. 1 at the Los Angeles Convention Center with an immersive pavilion featuring an arcade, a museum and a village of mixed reality installations. There were also exhibition spaces and presentation areas where emerging technologies were discussed and shared, as well as a VR Theater hosted as part of the event.

We saw A Kite’s Tale from long-time Disney visual effects artist and VR enthusiast Bruce Wright premiering in the theater alongside other new productions like Doctor Who: The Runaway. SIGGRAPH also featured more exploratory research, along with art-focused works from students and universities.

You can check out our 39-minute walk through the conference here:

Here are a few projects that caught our eye at SIGGRAPH 2019:

Prescription and Foveated AR

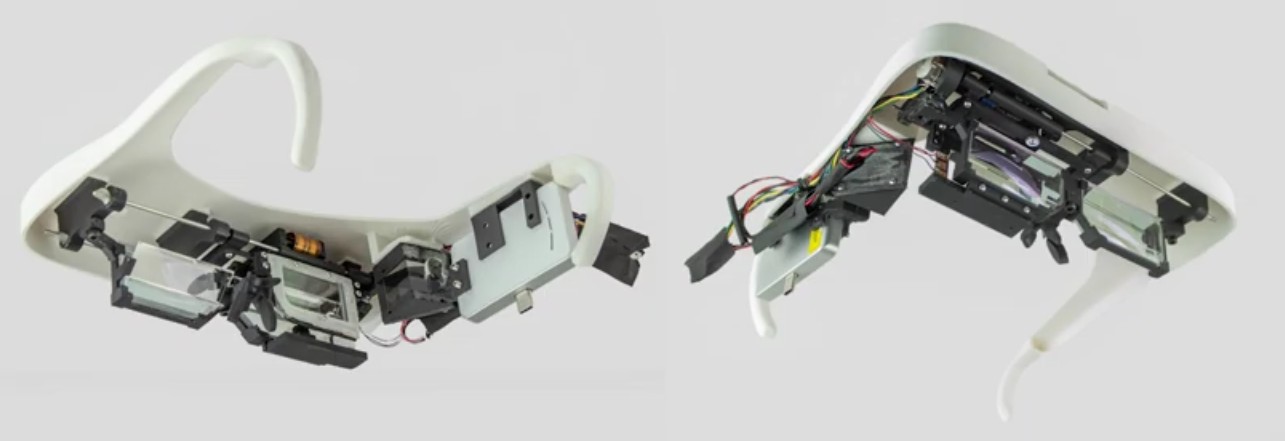

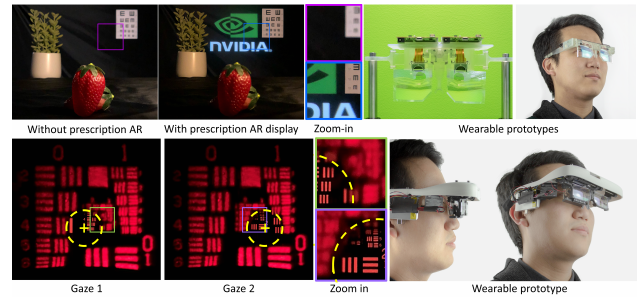

NVIDIA researchers presented two kinds of AR displays at SIGGRAPH 2019. One display builds the wearer’s prescription into its optics, while the other moves optical elements in response to gaze tracking.

The Prescription AR system is “a 5mm-thick prescription-embedded AR display based on a free-form image combiner,” according to the abstract. “A plastic prescription lens corrects viewer’s vision while a half-mirror-coated free-form image combiner located delivers an augmented image located at the fixed focal depth (1 m).”

The Foveated AR system is “a near-eye AR display with resolution and focal depth dynamically driven by gaze tracking. The display combines a traveling microdisplay relayed off a concave half-mirror magnifier for the high-resolution foveal region, with a wide FOV peripheral display using a projector-based Maxwellian-view display whose nodal point is translated to follow the viewer’s pupil during eye movements using a traveling holographic optical element (HOE).”

The foveated system uses an “infrared camera” to track eye movement and drive the optics directly in front of the eyeball. “Our display supports accommodation cues by varying the focal depth of the microdisplay in the foveal region, and by rendering simulated defocus on the ‘always in focus’ scanning laser projector used for peripheral display.”

Anthropomorphic Tail

Arque is eye-catching work from the Embodied Media Project at Keio University’s Graduate School of Media Design in Japan, which proposes “an artificial biomimicry-inspired anthropomorphic tail to allow us to alter our body momentum for assistive, and haptic feedback applications.”

The tail’s structure is “driven by four pneumatic artificial muscles providing the actuation mechanism for the tail tip” and, according to the abstract for the project submitted to SIGGRAPH’s emerging technologies, it highlights what such a prosthetic tail could do “as an extension of human body to provide active momentum alteration in balancing situations, or as a device to alter body momentum for full-body haptic feedback scenarios.”

Ollie VR Animation Tool

Ollie is a new animation tool designed for intuitive creation in VR that’ll be shown at SIGGRAPH.

We’ve seen tools like Quill, Tvori and Mindshow used for VR-based animations, but Ollie focuses on a notebook-like interface with “motion paths and keyframes visualized spatially, automatic easing, and automatic squash and stretch” meant to make it easier for first-time animators to create something. The app’s creators tell me they should have the entire app running on Oculus Quest to show at SIGGRAPH.

LiquidMask

This project from Taipei Tech’s Department of Interaction Design pumps liquid into a VR headset to provide tactile sensations. “Filling liquid in the water pipe as a transmission interface, this system can simultaneously produce thermal changes and vibration responses on the face skin of the users,” according to the project’s description.

This post was originally published on July 16 and updated with videos from the conference on August 8.