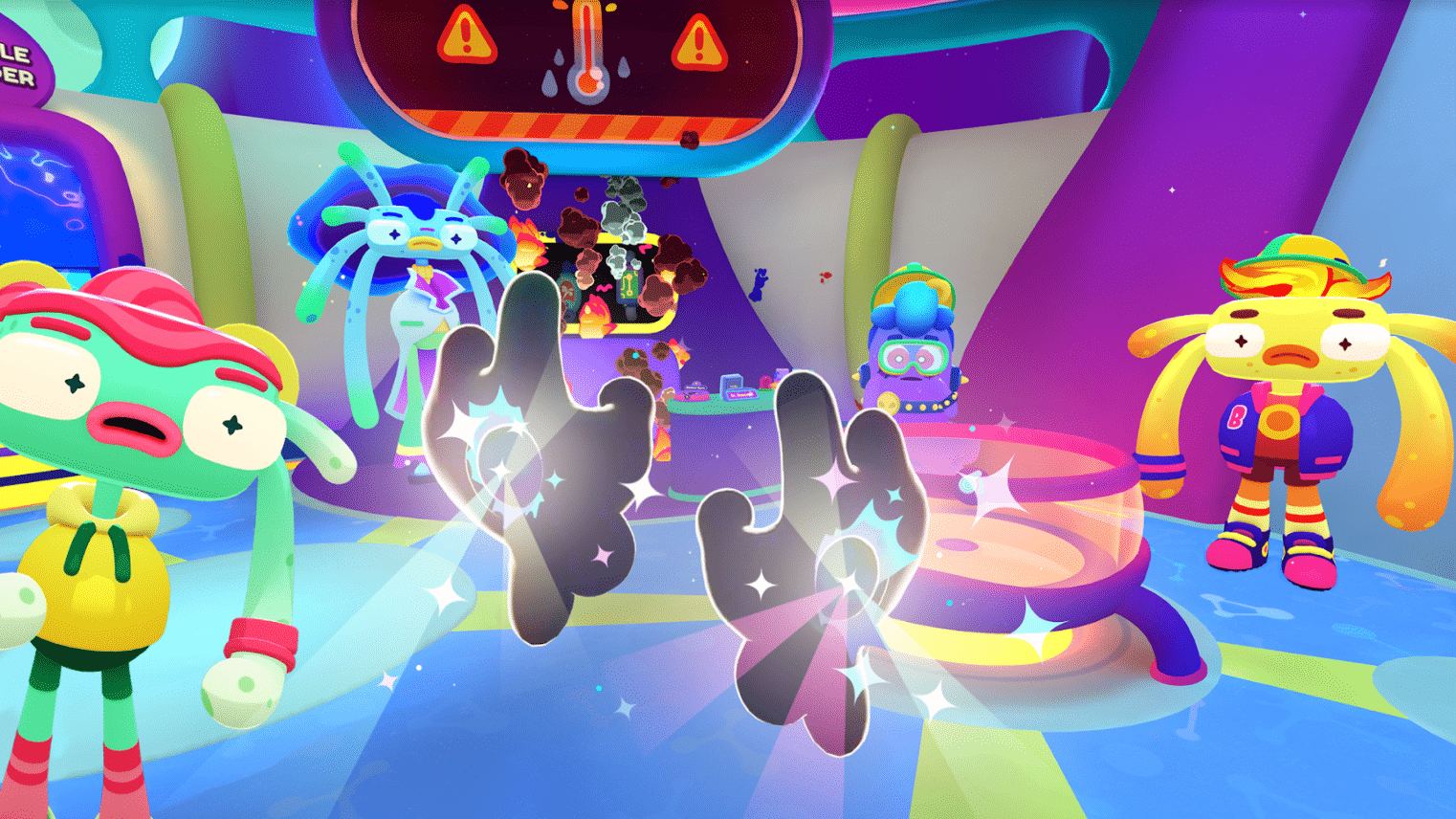

When Owlchemy Labs revealed its next game back at Gamescom in August, there wasn’t a whole lot to talk about.

The announcement was fairly nondescript, with a teaser trailer that revealed next to nothing and failed to show any gameplay. Instead, Owlchemy focused on two key facts: the game will be multiplayer and support hand tracking.

Both of those are new for Owlchemy, a studio that built a reputation on producing satisfying and native VR interaction systems that cater to all levels of players. A shining example of that is the studio’s most well-known title, Job Simulator. Its user-friendly interaction system is likely one of the reasons it remains a best seller across several VR platforms today, despite its 2017 release date.

Around the time Job Simulator was released, the Owlchemy team was trying to crack the design code for an emerging technology: VR motion controllers. Five years later, the team finds itself pioneering another emerging form of VR input. “For us, a lot of this [work with hand tracking] feels very similar to the way we felt when we first got our hands on the Vive. We’re like ‘Alright, let’s figure this out, there’s some technical hurdles we have to overcome, but every version of it gets better.’ Today is the worst version of hand tracking you’re going to play. Tomorrow, or a few months from now, it will always be better than today.”

While the team is reluctant to confirm hand tracking is the exclusive input method for its new title, it certainly isn’t thinking much about controllers during development. “We are big on accessibility,” says Andrew Eiche, Owlchemy Labs’ Chief Operating Owl, sitting across from me at a table on the show floor earlier this year at Gamescom. “We are building the ground up for hand tracking and right now, our developers are developing exclusively in hand tracking. The game does not have controller stuff built into it. We’re not letting our developers use controllers [during development] because we need to solve the hard problems.”

Eiche believes that if Owlchemy can solve some of these ‘hard problems’, then hand tracking will progress further towards a true primary input method for VR. Despite many titles offering hand tracking on Quest – whether as a requirement or as an exclusive mode of input – the technology still has limitations and there are few standards and agreed-upon methods of implementation.

What’s most interesting about this new push from Owlchemy is that the studio is known for its precise interaction systems and yet, by all accounts, current hand tracking technology is usually defined by the complete opposite. While it might be more immersive in some settings, it’s certainly less precise than motion controllers.

“We need to get a conversation moving on hand tracking, externally as much as it’s moving internally. We want to engage on that front and put hand tracking outside the box of novelty.” Eiche sees most existing implementations of hand tracking as a cool feature that lives in the world of novelty. There are some exceptions – Eiche notes Cubism as a personal favorite – but it seems more like a feature you use when you forget to pick up your controllers. “We’re trying to say [that] it can be more than that. It can be your primary input method.”

“It’s tough to foresee an industry that has the adoption we all hope it has [while using motion controllers],” ponders Eiche. “How do you get a billion headsets out there? You can’t hand someone a snapped-in-half Xbox or PlayStation controller, say ‘We’re gonna put a blindfold on you now. Touch X.’ You need some natural interaction method.”

Motion controllers have proved a useful transitional instrument in VR’s recent period of growth, but Eiche and the Owlchemy team see hand tracking as a way to drive growth even further. Eiche points to a past example: the natural interface of touch screens, which drove mass adoption of cell phones in the late 2000s. “We had cell phones with keyboards for a long, long time and they filled a very specific niche. Keyboards haven’t gone away, in fact we can even hook a keyboard up to your cell phone right now, there’s nothing stopping you. But the thing that really got smartphones to switch from being PDAs with a cell connection to being truly adopted was figuring out that natural user interface [of touch screens].”

It could be that motion controllers are to the VR headset what the keyboard is to the smartphone. “I don’t think controllers are going away, but I think if you talk about how you get a billion people to play VR? It’s not controllers, you’re just not gonna win that fight.”

With past releases, Owlchemy reduced the friction of motion controllers by using diegetic VR design – this means that everything, be it a button, switch or menu, is a part of the immersive VR world that they create for the player, ignoring controller buttons wherever possible. “We try to put as much in the [VR] world, because the more you see and touch, the better off you are. Instead of ‘Press A’, there’s a big control panel and you hit the button.”

Moving to hand tracking is the natural next step, but it comes with its own difficulties, many of which are linked to the current limitations of the technology. “You always know when somebody has done a lot of hand tracking experiences when they do the sideways pinch. That’s like the number one [indicator] … because [they know] the camera has to see your fingers do the pinch, the pick up … How do we make throwing [with hand tracking] great so that people don’t think about it? So that you don’t do the weird hand tracking throw.”

There’s also an inherently different experience to interactions when using hand tracking or motion controllers. “It feels like the difference between Duplo and Lego. In the controller mode, you’ve got big mitts … and hand tracking mode, you’ve [got] this very fine control.”

“With your full hands, you’re able to do things with small objects that are more interesting, which wouldn’t be interesting with a big hand. Picking up a small cube or a coin or something with an oven mitt feels bad.” Previous Owlchemy titles make items larger than life – such as making a coin the size of a sand dollar – so that picking them up with controllers feels natural. “But if we put a small cube or sphere in there, because you have each individual finger [with hand tracking], that’s interesting. There’s a lot of interesting things you can do once you have a very accurate, discrete understanding of where your whole hand is and fingers are as you’re curling them in.”

“There’s a lot of cool states that exist between complete closed fist and complete open palm. Most things in life, when you pick them up, you don’t grip them with the absolute pinnacle of your grip strength. That creates an interesting interaction dynamic, where you can start thinking about that and what that means and how that plays out.”

While the specific details of what Owlchemy’s next game will entail remain fuzzy, it’s the team’s passion for pushing technology forward that comes through loud and clear. Hand tracking is the next step for Owlchemy – that much isn’t up for debate. “We see the excitement [for hand tracking] and we see the potential. If we can build all our stuff while it’s in this [preliminary] state, then when it hits, we’re not playing catch-up.”

“We think we’re right on the cusp here,” says Eiche, smiling. “It’s gonna be great.”