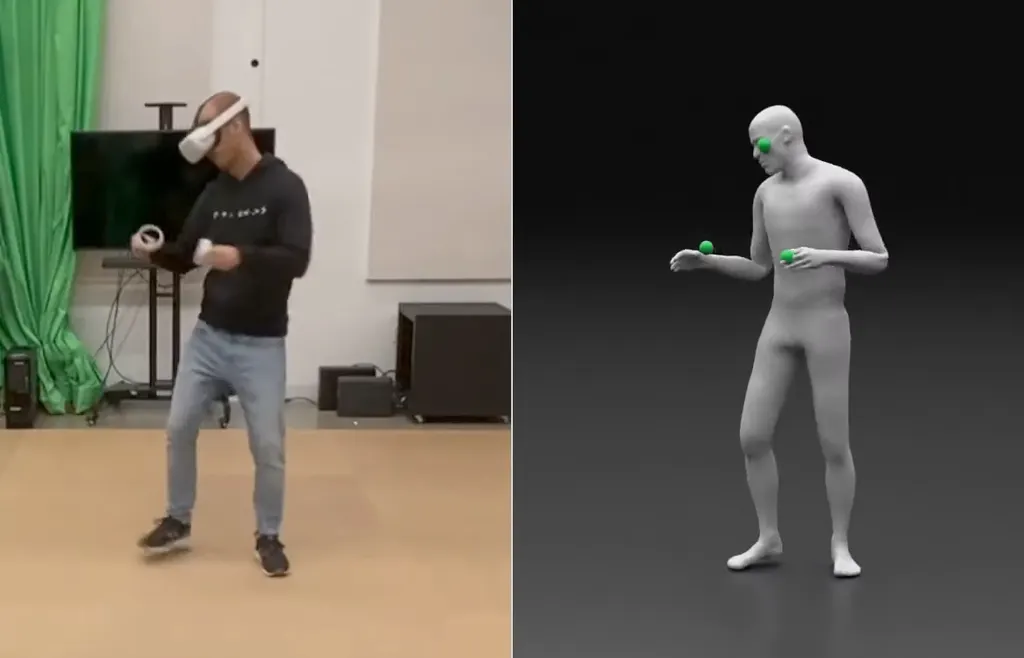

Meta researchers demonstrated Quest 2 body pose estimation without extra trackers.

Current VR systems ship with a headset and handheld controllers, so they only track the position of your head and hands. The positions of your elbows, torso, and legs can be estimated using a class of algorithms called inverse kinematics (IK), but the estimate is only sometimes accurate for elbows and rarely correct for legs. There are simply too many possible solutions for any given set of head and hand positions.
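To illustrate why IK is under-determined, here is a minimal sketch of the simplest case: a two-bone arm. It assumes the shoulder position and both bone lengths are known (in practice a headset does not track the shoulder either, which only makes things worse). The function name `elbow_candidates` and all the numbers are hypothetical, and this is not the solver used by any particular app or by QuestSim. Even with shoulder and wrist fixed, the elbow can sit anywhere on a circle around the shoulder-to-wrist axis.

```python
import math

def _cross(a, b):
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]

def _norm(a):
    return math.sqrt(sum(c * c for c in a))

def elbow_candidates(shoulder, wrist, upper_len, fore_len, count=4):
    """Return `count` elbow positions that all fit the same shoulder and wrist."""
    reach = [w - s for s, w in zip(shoulder, wrist)]
    d = _norm(reach)
    if d == 0 or d > upper_len + fore_len or d < abs(upper_len - fore_len):
        raise ValueError("wrist is out of reach for these bone lengths")
    u = [c / d for c in reach]  # unit vector from shoulder to wrist
    # Distance along the axis to the centre of the elbow circle, and its radius.
    h = (upper_len ** 2 - fore_len ** 2 + d ** 2) / (2 * d)
    r = math.sqrt(max(upper_len ** 2 - h ** 2, 0.0))
    # Build two unit vectors perpendicular to the shoulder-wrist axis.
    helper = [1.0, 0.0, 0.0] if abs(u[0]) < 0.9 else [0.0, 1.0, 0.0]
    v = _cross(u, helper)
    v_len = _norm(v)
    v = [c / v_len for c in v]
    w = _cross(u, v)
    centre = [s + h * c for s, c in zip(shoulder, u)]
    elbows = []
    for k in range(count):
        t = 2 * math.pi * k / count  # swivel angle around the axis
        elbows.append([centre[i] + r * (math.cos(t) * v[i] + math.sin(t) * w[i])
                       for i in range(3)])
    return elbows

# Hypothetical positions in metres; every printed elbow is equally valid.
shoulder = [0.0, 1.4, 0.0]
wrist = [0.3, 1.2, 0.3]
for elbow in elbow_candidates(shoulder, wrist, 0.30, 0.27):
    print([round(c, 3) for c in elbow])
```

Every candidate keeps both bone lengths intact, so position data alone cannot pick between them. Legs are harder still, since a headset and controllers give no tracked point anywhere near the feet.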

Given the limitations of IK, some VR apps today show only your hands, and many others show only an upper body. PC headsets using SteamVR tracking support extra worn trackers such as HTC’s Vive Tracker, but the three needed for body tracking cost north of $350, so most games don’t support them.

But in a new paper titled QuestSim, Meta researchers demonstrated a system driven by a neural network that can estimate a plausible full body pose with just the tracking data from Quest 2 and its controllers. No extra trackers or external sensors are needed.

The resulting avatar motion matches the user’s real motion fairly closely. The researchers even claim the resulting accuracy and jitter are superior to worn IMU trackers – devices with only an accelerometer and gyroscope, such as Pico 4’s announced Pico Fitness Band (though Pico claims it is working on its own machine learning based solution).

However, there is a catch. As seen in the video, this system is designed to produce a plausible full body pose, not to match the exact position of your hands. The system’s latency is also 160ms – more than 11 frames at 72Hz. QuestSim would only be suitable for seeing other people’s avatar bodies, not seeing your own when looking down.
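The frame count follows from simple arithmetic, sketched below. The helper name `latency_in_frames` is hypothetical; the 160ms and 72Hz figures are the ones quoted above.

```python
def latency_in_frames(latency_ms, refresh_hz):
    """Convert a latency in milliseconds to a number of display frames."""
    return latency_ms * refresh_hz / 1000.0

# 160 ms of latency on a 72 Hz display.
print(latency_in_frames(160, 72))  # 11.52
```

At 72Hz each frame lasts about 13.9ms, so the pose shown would lag the real motion by roughly eleven and a half frames.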

Still, seeing the full body motion of other people’s avatars would be far preferable to the often-criticized legless upper bodies of Meta’s current avatars. So is this system, or something like it, coming to Quest 2?

Meta CTO Andrew Bosworth certainly seemed to hint at this last week. When asked about leg tracking in an Instagram “ask me anything”, Bosworth responded:

“Yeah we’ve been made fun of a lot for the legless avatars, and I think that’s very fair and I think it’s pretty funny.

Having legs on your own avatar that don’t match your real legs is very disconcerting to people. But of course we can put legs on other people, that you can see, and it doesn’t bother you at all.

So we are working on legs that look natural to somebody who is a bystander – because they don’t know how your real legs are actually positioned – but probably you when you look at your own legs will continue to see nothing. That’s our current strategy.”

The short-term solution might not be of the same quality as this algorithm, though. The systems described in machine learning research papers tend to run on powerful PC GPUs at relatively low framerates. The paper doesn’t mention the runtime performance of the system described.

The Meta Connect annual AR/VR event takes place in just over 2 weeks, so any body tracking announcement would likely take place during it.