Researchers at Google have developed the first end-to-end 6DoF (six degrees of freedom) video system, which can even stream over (high-bandwidth) internet connections.

Current 360 videos can take you to exotic places and events and let you look around, but you can’t actually move your head forward, backward, or side to side. This makes the entire world feel locked to your head, which really isn’t the same as being somewhere at all.

Google’s new system covers the entire video stack: capture, reconstruction, compression, and rendering, delivering a milestone result.

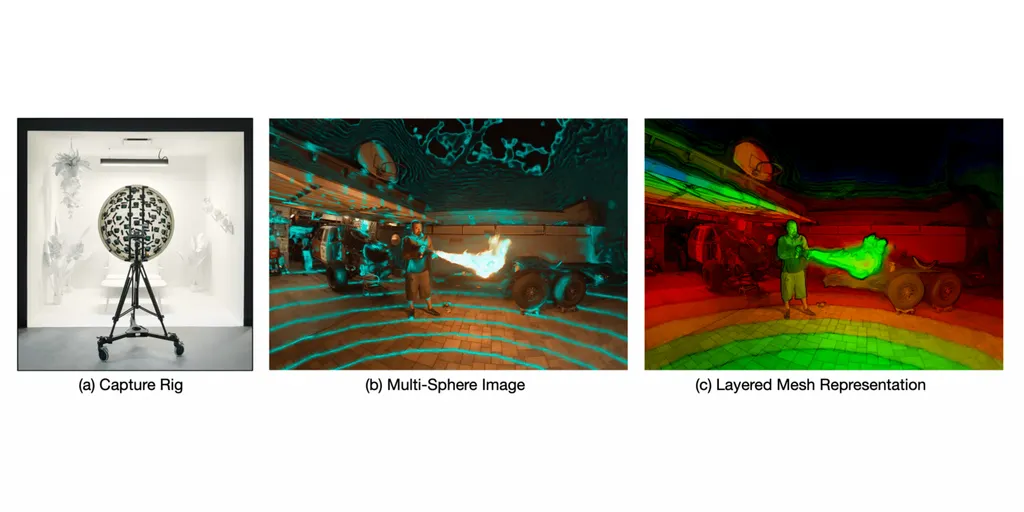

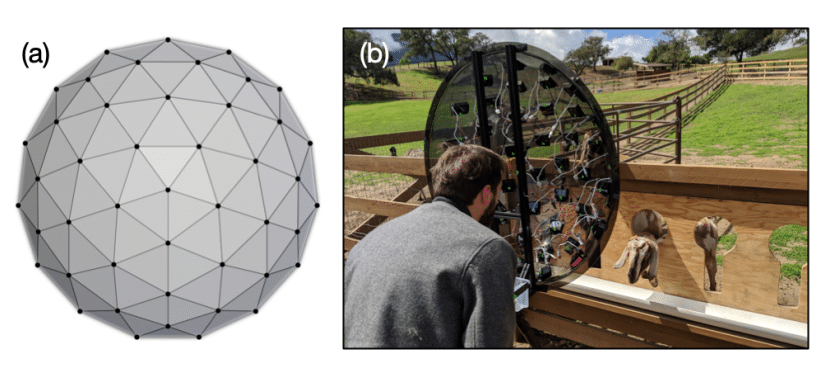

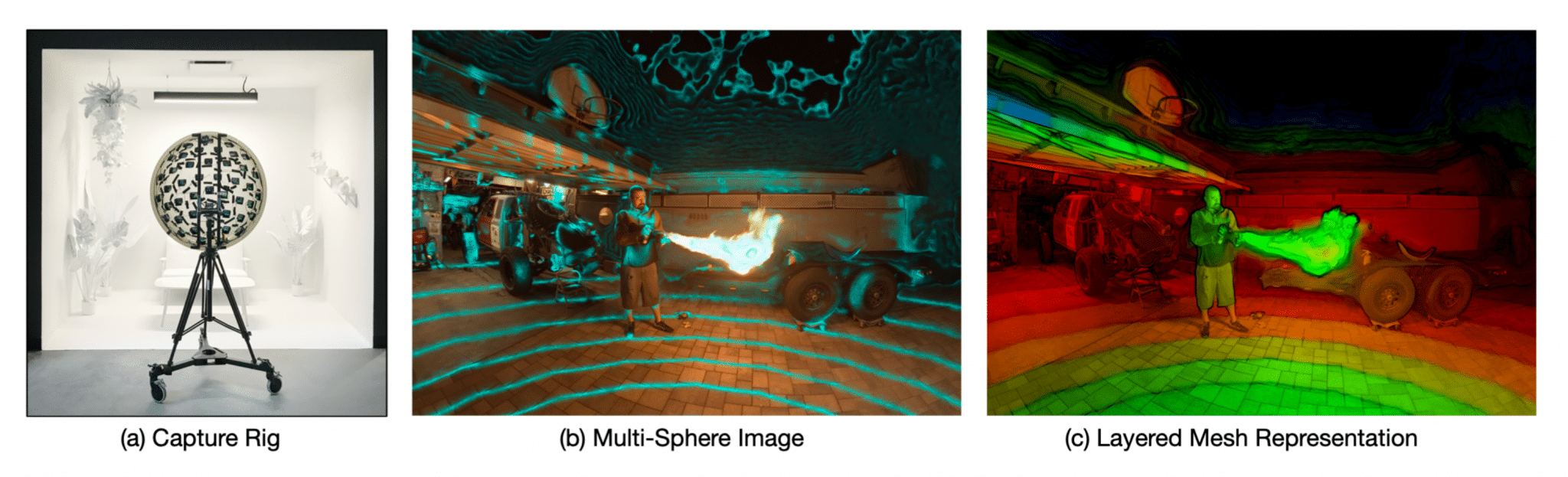

The camera rig features 46 synchronized 4K cameras running at 30 frames per second. The cameras are mounted on a “low cost” acrylic dome. Since the acrylic is semi-transparent, it can even be used as a viewfinder.

Each camera has a retail price of $160, which puts the cameras alone at just north of $7,000 for the rig. That may sound high, but it’s actually considerably lower than the cost of bespoke alternatives. 6DoF video is a new technology just starting to become viable.
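The rig estimate is simple arithmetic. A quick sketch using only the camera count and price quoted above (cameras only, so the dome, mounts, and storage are not counted):

```python
# Camera count and per-camera retail price as quoted in the article.
NUM_CAMERAS = 46
PRICE_PER_CAMERA_USD = 160


def camera_cost(num_cameras: int, unit_price_usd: int) -> int:
    """Total retail cost of the cameras alone (no dome, mounts, or storage)."""
    return num_cameras * unit_price_usd


total = camera_cost(NUM_CAMERAS, PRICE_PER_CAMERA_USD)
print(f"Cameras alone: ${total:,}")
```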

The result is a 220-degree “lightfield” with a width of 70cm, which is how far you can move your head. The resulting resolution is 10 pixels per degree, meaning it will probably look somewhat blurry on any modern headset with the exception of the original HTC Vive. As with all technology, that will improve over time.
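To put 10 pixels per degree in context, angular resolution can be roughly estimated by dividing horizontal pixels by horizontal field of view. The sketch below does just that. The headset figures are commonly quoted approximate per-eye numbers and are assumptions, not values from Google’s paper, and the even division ignores lens distortion.

```python
def pixels_per_degree(horizontal_pixels: float, fov_degrees: float) -> float:
    """Average angular resolution, assuming pixels are spread evenly over the FOV."""
    return horizontal_pixels / fov_degrees


# Figures from the article.
LIGHT_FIELD_PPD = 10
LIGHT_FIELD_FOV_DEG = 220

# Horizontal pixels needed to cover the full field at that density.
equivalent_width_px = LIGHT_FIELD_PPD * LIGHT_FIELD_FOV_DEG

# ASSUMPTION: original HTC Vive, about 1080 px wide per eye over about 110 degrees.
vive_ppd = pixels_per_degree(1080, 110)

print(f"Light field spans roughly {equivalent_width_px} px horizontally")
print(f"Original Vive (assumed specs): {vive_ppd:.1f} px/degree")
```

Under those assumed specs the original Vive lands close to 10 pixels per degree, which is why the footage should roughly match that headset while looking soft on denser displays.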

But what’s really impressive is the compression and rendering. A light field video can be streamed over a reliable 300 Mbit/sec internet connection. That’s still well beyond average internet speeds, but most major cities now offer this kind of bandwidth.
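For a sense of scale, the 300 Mbit/sec figure can be converted into data per second, per frame, and per minute. This is a back-of-the-envelope sketch that assumes playback at the same 30 frames per second used for capture and uses decimal units (1 GB = 1000 MB); the real stream will not split its bits evenly across frames.

```python
STREAM_MBIT_PER_S = 300  # from the article
FPS = 30                 # ASSUMPTION: playback rate equals the capture rate


def mbit_to_mbyte(mbit: float) -> float:
    """Convert megabits to megabytes (8 bits per byte)."""
    return mbit / 8


mb_per_second = mbit_to_mbyte(STREAM_MBIT_PER_S)
mb_per_frame = mb_per_second / FPS          # average budget per frame
gb_per_minute = mb_per_second * 60 / 1000   # decimal gigabytes

print(f"{mb_per_second} MB per second")
print(f"{mb_per_frame} MB per frame on average")
print(f"{gb_per_minute} GB per minute of video")
```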

How Does It Work?

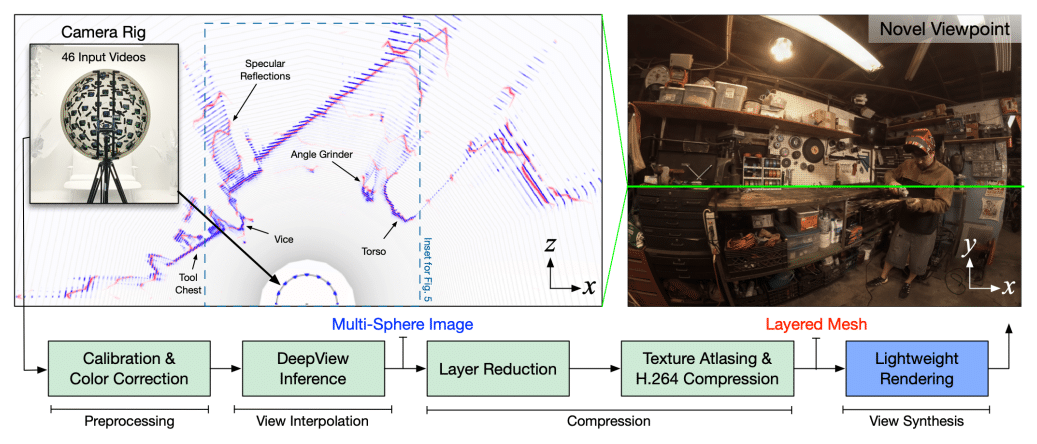

In 2019 Google’s AI researchers developed a machine learning algorithm called DeepView. With an input of 4 images of the same scene, from slightly different perspectives, DeepView can generate a depth map and even generate new images from arbitrary perspectives.

This new 6DoF video system uses a modified version of DeepView. Rather than representing the scene through 2D planes, the modified algorithm uses a collection of spherical shells. A second algorithm then reduces this output to a much smaller number of shells.
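One way to picture rendering from a stack of shells: a view ray crosses each shell once, and the samples are blended from the farthest shell to the nearest. The sketch below assumes each shell stores a colour and an opacity (alpha) and blends them with the standard “over” operator. The article does not spell out the blending step, so treat this as an illustration of layered rendering in general rather than Google’s implementation.

```python
from typing import List, Tuple

RGB = Tuple[float, float, float]
Sample = Tuple[RGB, float]  # (colour, alpha) where the ray crosses one shell


def composite_back_to_front(samples_far_to_near: List[Sample]) -> RGB:
    """Blend per-shell samples along one ray with the 'over' operator."""
    r, g, b = 0.0, 0.0, 0.0  # start from black behind the farthest shell
    for (sr, sg, sb), alpha in samples_far_to_near:
        # Each nearer shell covers what is behind it in proportion to its alpha.
        r = sr * alpha + r * (1.0 - alpha)
        g = sg * alpha + g * (1.0 - alpha)
        b = sb * alpha + b * (1.0 - alpha)
    return (r, g, b)


# Hypothetical samples for a single ray, ordered far to near.
ray_samples = [
    ((0.2, 0.4, 1.0), 1.0),  # far shell: opaque blue sky
    ((0.0, 0.0, 0.0), 0.0),  # middle shell: empty here
    ((1.0, 0.0, 0.0), 0.5),  # near shell: half-transparent red
]
pixel = composite_back_to_front(ray_samples)
print(pixel)
```

Fully transparent shells contribute nothing to the result, which hints at why cutting down the number of shells can save so much work.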

Finally, these spherical layers are transformed into a much lighter “layered mesh”, which samples from a texture atlas to further save on resources. (This is a technique used in game engines, where textures for different models are stored in the same file, tightly packed together.)
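To make the texture atlas idea concrete, here is a minimal sketch of packing several small textures into one image and converting each placement into normalized texture coordinates. It uses a simple “shelf” packer, a generic approach chosen for brevity and not the method from Google’s paper; the patch names and sizes are hypothetical.

```python
from typing import Dict, Tuple

Size = Tuple[int, int]            # (width, height) in pixels
Rect = Tuple[int, int, int, int]  # (x, y, width, height) inside the atlas


def pack_shelves(sizes: Dict[str, Size], atlas_width: int) -> Tuple[Dict[str, Rect], int]:
    """Place rectangles left to right in rows ("shelves"), tallest first.

    Returns the placements and the atlas height that was needed.
    """
    placements: Dict[str, Rect] = {}
    x = y = shelf_height = 0
    for name, (w, h) in sorted(sizes.items(), key=lambda item: -item[1][1]):
        if w > atlas_width:
            raise ValueError(f"{name} is wider than the atlas")
        if x + w > atlas_width:  # row is full, so start a new shelf below it
            y += shelf_height
            x = shelf_height = 0
        placements[name] = (x, y, w, h)
        x += w
        shelf_height = max(shelf_height, h)
    return placements, y + shelf_height


def to_uv(rect: Rect, atlas_w: int, atlas_h: int) -> Tuple[float, float, float, float]:
    """Convert a pixel rectangle to normalized (u0, v0, u1, v1) coordinates."""
    x, y, w, h = rect
    return (x / atlas_w, y / atlas_h, (x + w) / atlas_w, (y + h) / atlas_h)


# Hypothetical mesh patches and their texture sizes.
patches = {"patch_a": (64, 64), "patch_b": (32, 48), "patch_c": (64, 32), "patch_d": (32, 16)}
placements, atlas_height = pack_shelves(patches, atlas_width=128)

for name, rect in placements.items():
    print(name, rect, to_uv(rect, 128, atlas_height))
```

Each mesh then only needs its own rectangle of coordinates, so the renderer can keep a single texture bound instead of switching between many small ones.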

You can read the research paper and try out some samples in your browser on Google’s public page for the project.

Light field video is still an emerging technology in its early stages, so don’t expect YouTube to start supporting light field videos in the near future. But it does look clear that one of the holy grails of VR content, streamable 6DoF video, is now a solvable problem.

We’ll be keeping a close eye on this technology as it starts to transition from research to real world products.