Gleechi’s VirtualGrasp software development kit is now available through the company’s early access program, offering auto-generated and dynamic hand interactions and grasp positions for VR developers.

We first reported on Gleechi’s technology back in early 2020, when the company released footage of its VirtualGrasp SDK in action. The SDK automates the programming of hand interactions in VR and allows for easy grasping and interaction with any 3D mesh object.

Two years on, the SDK is now available through Gleechi’s early access program, which is open to applications. The SDK supports all major VR platforms and is provided as a plug-in that can be integrated into existing applications. It supports both Unity and Unreal, and works with both controller and hand tracking interactions.

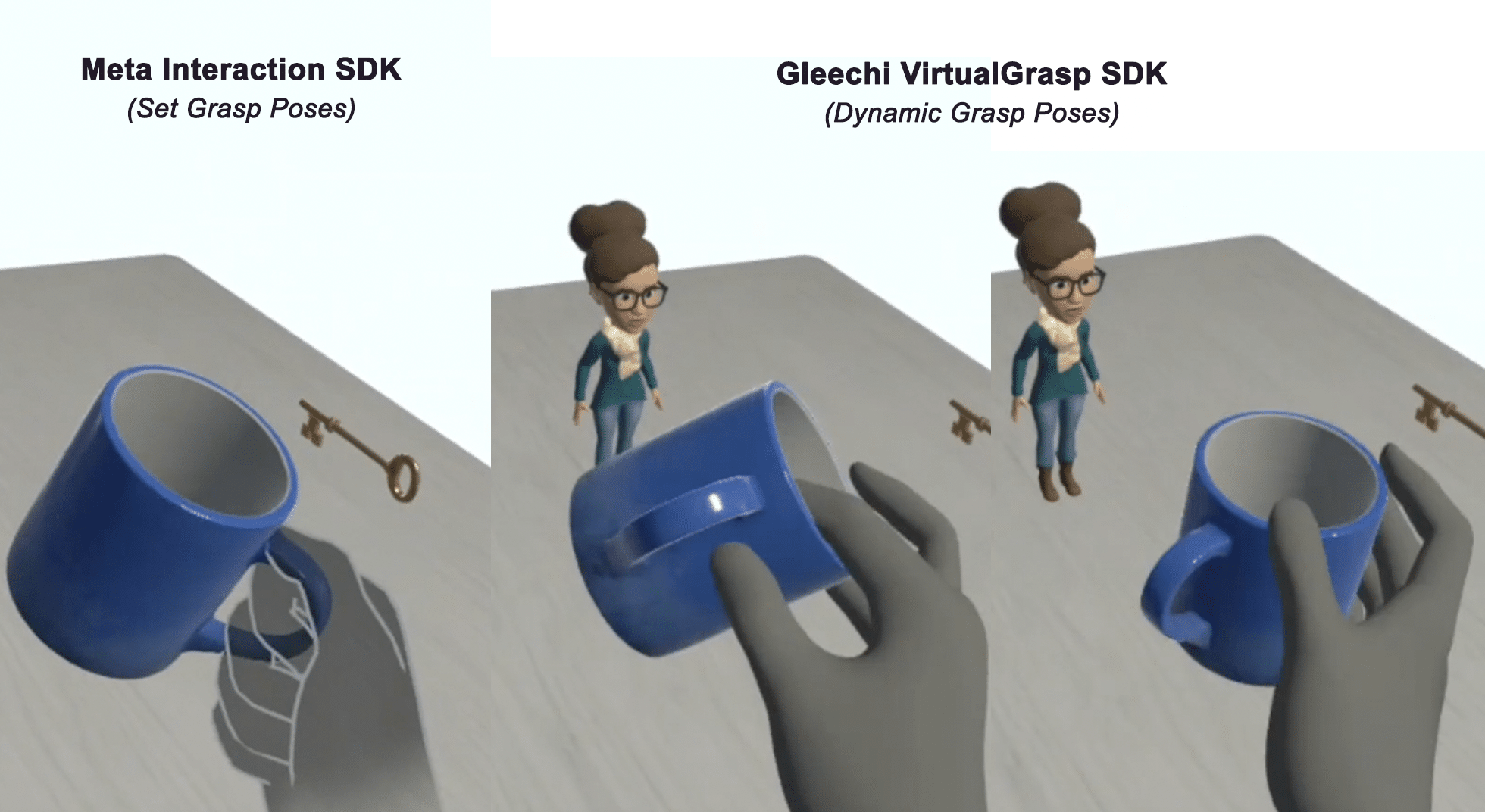

Given the timing of release, you might ask what the difference is between Meta’s new interaction SDK and Gleechi’s VirtualGrasp SDK. The key difference is that Meta’s technology uses set positions for grasps and interactions – if you pick up an object, it can snap to pre-determined grasp positions that are manually assigned by the developer.

On the other hand (pun intended), the Gleechi SDK is designed as a dynamic system that can generate natural poses and interactions between hands and objects automatically, using the 3D mesh of the objects. This should mean much less manual input and assignment is needed from the developer, and it allows for more immersive interactions that can appear more natural than pre-set positions.

You can see an example of how the two SDKs differ in the graphic above, created with screenshots taken from a demonstration video provided by Gleechi. On the left, the interaction uses the Meta SDK – the mug therefore relies on fixed positions and grab poses that the developer assigns manually. In this case, it’s set so the user will always grab the mug by the handle. Multiple grab poses are possible with the Meta SDK, but each has to be manually set up by the developer.

In the middle and on the right, you can see how Gleechi’s SDK allows the user to dynamically grab the mug from any angle or position. A natural grab pose is applied to the object depending on the position of the hand. The SDK generates that pose automatically from the 3D mesh of the object, without the developer having to set up poses manually.

Gleechi also noted that its SDK supports manual grasp positions as well. Developers can use the dynamic grasp system to find a position they’d like to set as a static grasp and then lock it in. For example, a developer could use VirtualGrasp’s dynamic system to pick the mug up from the top, as pictured above, and then set that as the preferred position for the object. The mug will then always snap to that pose when picked up, rather than being grasped dynamically from wherever the hand happens to be. This allows you to set static hand grip poses for some objects, while still using the automatic dynamic poses for others.
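
To make the distinction between dynamic and static grasps more concrete, here is a deliberately simplified Python sketch. It is not the VirtualGrasp or Meta API: the `GraspableObject` class, its methods and the mug coordinates are all hypothetical, and a full hand pose is reduced to a single contact point chosen as the nearest sampled surface point to the hand. The real SDK generates complete hand poses from the object mesh, which this toy model does not attempt.

```python
from dataclasses import dataclass
from math import dist
from typing import List, Optional, Tuple

Point = Tuple[float, float, float]


@dataclass
class GraspableObject:
    """Hypothetical toy model; not part of any real SDK."""
    surface_points: List[Point]           # stand-in for points sampled from the 3D mesh
    locked_grasp: Optional[Point] = None  # static grasp, if the developer has set one

    def dynamic_grasp(self, hand: Point) -> Point:
        # Dynamic behaviour: pick the surface point closest to the hand.
        return min(self.surface_points, key=lambda p: dist(p, hand))

    def lock_grasp_from(self, hand: Point) -> None:
        # Use the dynamic system once to find a grasp, then store it as static.
        self.locked_grasp = self.dynamic_grasp(hand)

    def grasp(self, hand: Point) -> Point:
        # Static behaviour wins when a grasp has been locked in.
        if self.locked_grasp is not None:
            return self.locked_grasp
        return self.dynamic_grasp(hand)


# Made-up coordinates for three spots on a mug.
TOP: Point = (0.0, 0.1, 0.0)
HANDLE: Point = (0.05, 0.0, 0.0)
SIDE: Point = (-0.05, 0.0, 0.0)

mug = GraspableObject(surface_points=[TOP, HANDLE, SIDE])

from_above = mug.grasp((0.0, 0.3, 0.0))     # hand approaching from above
from_the_side = mug.grasp((0.2, 0.0, 0.0))  # hand approaching the handle side

mug.lock_grasp_from((0.0, 0.3, 0.0))        # lock in the grasp from the top
after_lock = mug.grasp((0.2, 0.0, 0.0))     # same side approach, now static
```

Before the lock, the chosen point depends on where the hand approaches from. After the lock, the same side approach returns the stored top grasp, mirroring the mug example described above.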

We were able to try a demo application using the Gleechi SDK on a Meta Quest headset and can confirm that the dynamic poses and interactions work as described and shown above. We could approach several objects from any angle or position, and the SDK would apply an appropriate grasp position that felt and looked much more natural than most other interactions that rely on pre-set poses and grasp positions.

If you’re interested in learning more, you can check out the VirtualGrasp site or head over to the Gleechi documentation page for details on the SDK’s capabilities.