If the only controller-free hand tracking you’ve used is Quest 2, you may not have the best opinion of it.

Sure, it’s cool to see your actual hands in VR for the first time. But try to navigate Quest’s menu system or grab objects in apps like Hand Physics Lab and you’ll soon realize the tracking quality leaves a lot to be desired.

At CES 2022 I tried Ultraleap’s fifth-generation technology, which the company calls Gemini. If you’ve been following VR since before the release of consumer headsets you’ll be familiar with Leap Motion, a startup that launched a desktop hand tracking accessory in 2013 which, from 2014, could be mounted to the front of the Oculus Rift Development Kit 2. It brought hand tracking to VR before most users even had access to motion controllers.

Leap Motion had a small range of demo applications, and it was even supported in the social platform AltspaceVR. But when the HTC Vive & Oculus Touch controllers launched in 2016 it quickly faded from relevance, despite a major algorithm quality update called ‘Orion’. By 2019 even Altspace had dropped support for Leap Motion – developers prioritize hardware that users actually own, and even though Leap Motion was relatively inexpensive (around $80) it still faced the classic chicken & egg problem of input accessories.

Leap Motion was acquired in 2019 by UK-based haptic technology firm Ultrahaptics, merging to become Ultraleap. A year later, Facebook shipped a software update to Oculus Quest adding hand tracking. Quest’s hand tracking leverages the onboard greyscale fisheye cameras (which can also see in the infrared spectrum). It does work, and millions of people have used it, but if your hands are at the wrong angle or too close together it quickly breaks down. Trying Ultraleap showed me just how much better hand tracking can be.

VR-quality hand tracking requires sensor overlap – the algorithm compares the perspective of each camera to determine the relative position of each hand. Quest’s cameras have a wide field of view, but since they’re positioned at the edges of the headset pointing outwards the hand tracking area is relatively small. That’s why it only works properly with your hands directly in front of you, and why you can notice the system re-establishing tracking as you bring your hands back into your view.
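To see why overlap matters, here is a minimal sketch of the textbook stereo principle: a point's depth can only be recovered when it appears in both camera images. This is not Quest's or Ultraleap's actual algorithm, which neither company has published. It assumes two idealized, rectified pinhole cameras rather than fisheye lenses, and the function name and all numbers are hypothetical.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth in metres of a point seen by two rectified pinhole cameras.

    focal_px     -- focal length in pixels (assumed identical for both cameras)
    baseline_m   -- distance between the two camera centres, in metres
    disparity_px -- horizontal shift of the point between the two images, in pixels
    """
    if disparity_px <= 0:
        # A point outside the overlap region is seen by one camera only,
        # so there is no disparity to triangulate from.
        raise ValueError("point must be visible in both cameras")
    return focal_px * baseline_m / disparity_px


# Hypothetical values: 400 px focal length, cameras 8 cm apart,
# fingertip shifted 64 px between the two images.
fingertip_depth = depth_from_disparity(400.0, 0.08, 64.0)
print(f"{fingertip_depth:.2f} m")
```

Once a hand leaves the region both cameras can see, there is nothing to compare, which fits the behaviour described above.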

Ultraleap’s latest Gemini hand tracking tech is the successor to Orion, and Ultraleap claims it was rewritten from the ground up. It was announced in 2020 alongside a multi-year agreement with Qualcomm to integrate and optimize it for the Snapdragon XR2 chip. On that chip, 95% of the algorithm runs on the DSP (Digital Signal Processor), freeing up the CPU for the actual VR & AR applications.

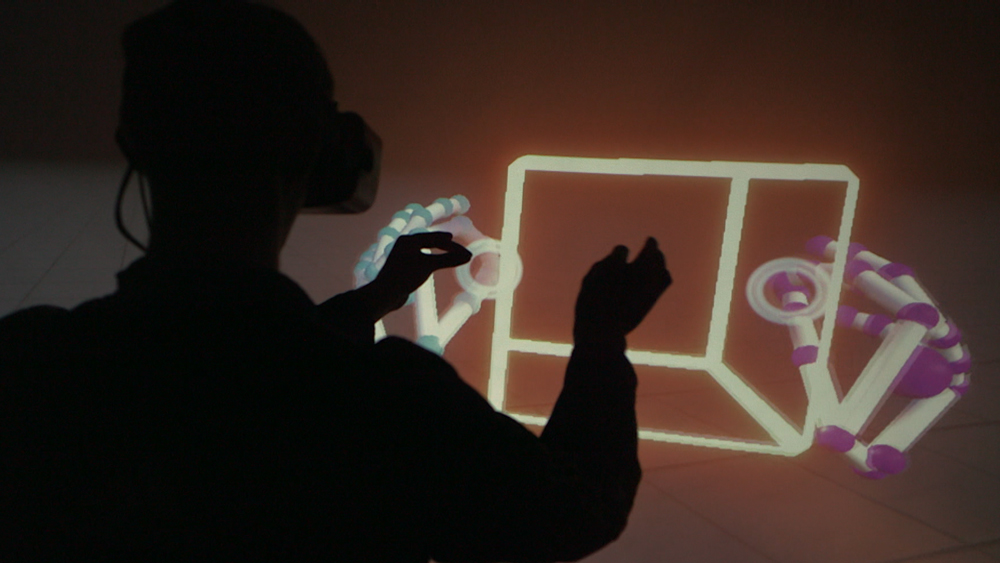

I tried Gemini as an attachment to the Vive Focus 3 virtual reality headset and as an integrated part of the upcoming Lynx R1 mixed reality headset – both headsets use the XR2 chip. The two infrared fisheye cameras face forward, not to the sides, giving almost total overlap over a 170-degree field of view. That’s wider than the field of view of either headset’s lenses (and of almost every headset in existence).
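A rough way to picture the difference is to compare how much of each camera's view is shared. The sketch below is a simplified 2D, far-field approximation that ignores the small distance between the cameras. The function name and the 40-degree outward angle are hypothetical illustrations, not measured specs of any headset; only the 170-degree figure comes from Ultraleap's hardware as described above.

```python
def stereo_overlap_deg(fov_deg: float, yaw_left_deg: float, yaw_right_deg: float) -> float:
    """Angular width (degrees) seen by BOTH cameras, far-field approximation.

    fov_deg       -- horizontal field of view of each camera
    yaw_left_deg  -- direction the left camera points (0 = straight ahead, negative = left)
    yaw_right_deg -- direction the right camera points (positive = right)
    """
    half = fov_deg / 2.0
    # Each camera covers the interval [yaw - half, yaw + half].
    shared_lo = max(yaw_left_deg - half, yaw_right_deg - half)
    shared_hi = min(yaw_left_deg + half, yaw_right_deg + half)
    return max(0.0, shared_hi - shared_lo)


# Both cameras facing forward: the full field of view is shared.
print(stereo_overlap_deg(170.0, 0.0, 0.0))

# Same cameras, each angled a hypothetical 40 degrees outward.
print(stereo_overlap_deg(170.0, -40.0, 40.0))
```

Under these assumptions, angling the same cameras outward trades shared coverage for total coverage, which suits headset tracking but shrinks the zone where hands can be triangulated.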

The result was that, just like when using motion controllers, I could stretch my arms out naturally and no longer had to worry about leaving the tracking range.

The hardware, like Leap Motion before it, also has active infrared emitters to illuminate your hands for a better view. And further, the sensors are sampled at 90 frames per second. The combination of these specs and the Gemini algorithm meant the virtual hands seemed to match my own precisely and with no perceptible latency. Gone were the lag and glitching of Quest’s hand tracking – Gemini felt generations ahead. I could even interlock my fingers. Only by almost entirely occluding one hand did tracking start to fail.

And speaking of occlusion, one of the most impressive aspects of the demo was the re-acquisition time. I purposely occluded one of my hands by passing it under a table, and tracking resumed almost instantly once it was visible again.

I have always believed controller-free hand tracking will play an important role in mainstream virtual reality. Some people object to this on the basis of the lack of haptics, a view I can’t really argue with. But others are skeptical on the grounds of a perceived lack of precision. Gemini proves that’s really just a limitation of Quest’s tracking quality, not a fundamental limit of the technology.

Gemini will be pre-integrated in the Lynx R1 headset, which starts at around $500 for Kickstarter backers. Simply put, there has never been hand tracking of this quality at that price. I’m excited to see what developers will do with it, and for future devices leveraging this tech. Meta has some catching up to do.