Apple confirmed Vision Pro supports porting Unity apps and games.

Acknowledging the existing Unity VR development community, Apple said "we know there is a community of developers who have been building incredible 3D apps for years" and announced a "deep partnership" with Unity in order to "bring those apps to Vision Pro".

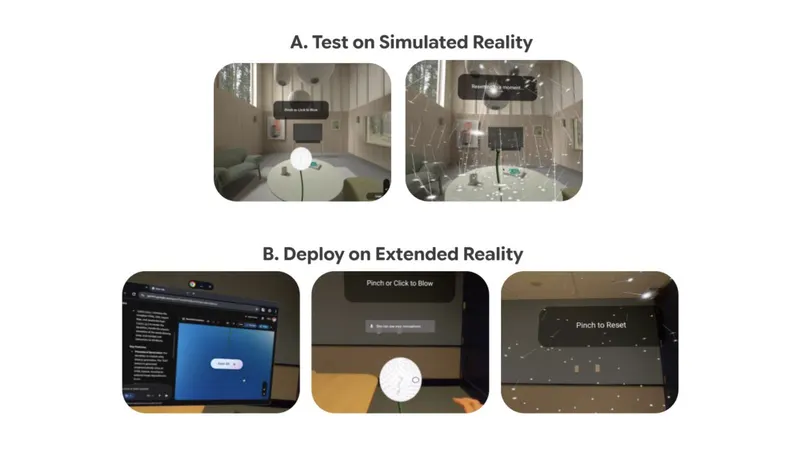

This partnership involved "layering" Unity's real-time engine on top of RealityKit, Apple's own high-level framework (arguably engine) for building AR apps, using Unity's new PolySpatial technology.

PolySpatial puts some restrictions on developers, such as requiring Shader Graph for all shaders and restricting postprocessing, but it enables Unity apps to run alongside other visionOS apps in your environment, a concept Apple calls the Shared Space.

Apps can also run in Full Space mode, though, where all other apps are hidden. This includes VR apps, which Apple calls 'Fully Immersive Experiences'.

Unity apps will get access to visionOS features including the use of real-world passthrough as a background, foveated rendering, and the native system hand gestures.

Apple also has its own Mac-based suite of tools for native spatial app development. You use the Xcode IDE, SwiftUI for user interfaces, and Apple's ARKit and RealityKit frameworks for handling tracking, rendering, physics, animations, spatial audio, and more. Apple even announced Reality Composer Pro, which is essentially its own 3D scene editor.

Vision Pro will have a "brand new" App Store offering immersive apps as well as iPhone and iPad apps that are compatible with the headset.